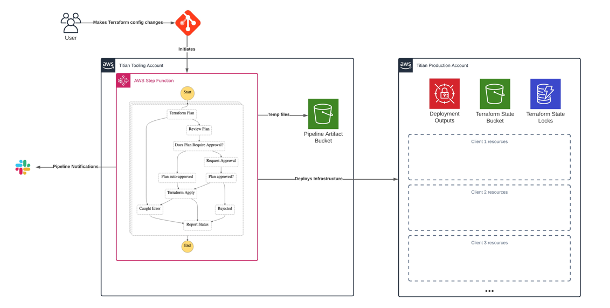

This solution needed to address two separate elements. The pipeline had to manage future environments effectively, while also accommodating the existing environments, which needed to be migrated and subsequently managed under the same deployment pipeline.

The first step in enabling full management of the environment via Terraform was to update the state configuration to use an S3 bucket and DynamoDB. Within the S3 bucket, a separate Terraform state file is created for each client. This limits the blast radius of any individual deployment to a single client and minimises the impact should any problems occur inside or outside of the Terraform state.
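As an illustration of this per-client state layout, a backend block might look like the minimal sketch below. The bucket name, key path, region, and table name are placeholders assumed for the example, not the values used in the actual deployment. The DynamoDB table is assumed to be used in the way the S3 backend normally uses one, for state locking.

```hcl
# Hypothetical backend configuration for a single client.
# All names below are illustrative placeholders, not taken from the project.
terraform {
  backend "s3" {
    bucket         = "example-terraform-state"             # assumed shared state bucket
    key            = "clients/client-a/terraform.tfstate"  # one state file per client
    region         = "eu-west-1"                           # assumed region
    dynamodb_table = "example-terraform-locks"             # assumed table used for state locking
    encrypt        = true                                  # encrypt the state object at rest
  }
}
```

Because each client has its own `key`, a failed or corrupted apply for one client touches only that client's state object.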

Secondly, in order to track the intended configuration for each client and to provide a trigger point for pipeline runs, Terraform configuration files for each client are also maintained in Git. This was set up using a separate repository purely for client Terraform configurations, with a different repository containing the generic, reusable modules.
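A client configuration in the first repository could then reference a module from the second along the lines of the sketch below. The repository URL, module path, version tag, and input variable names are all hypothetical, since the real module interface is not described here.

```hcl
# Hypothetical client configuration kept in the client repository.
module "client_environment" {
  # Assumed Git URL for the separate modules repository, pinned to a tag
  # so that module changes reach a client only through an explicit commit.
  source = "git::https://example.com/org/terraform-modules.git//environment?ref=v1.0.0"

  # Illustrative inputs; the real module's variables are not given in the source.
  client_name = "client-a"
  environment = "production"
}
```

Pinning the module with `ref` means a commit to the client repository, rather than a change to the shared modules, is what triggers a pipeline run for that client.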

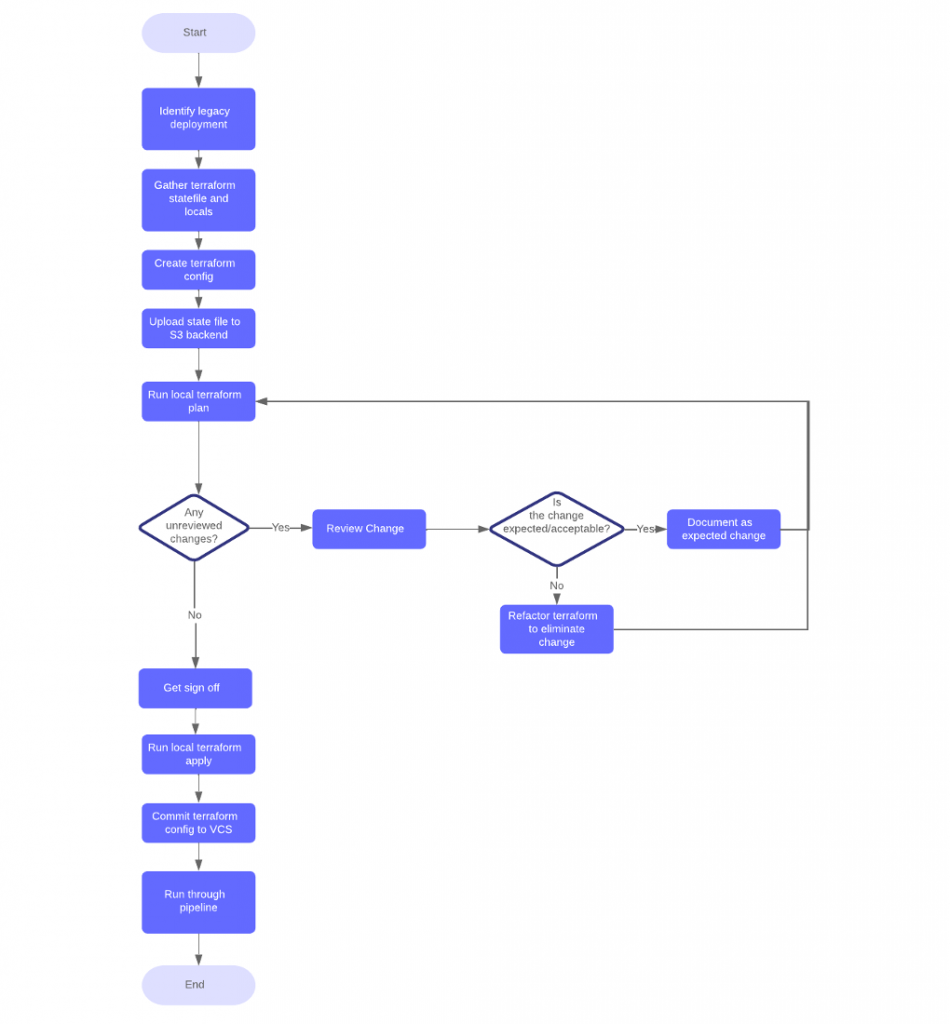

This flowchart details the activities discovered during the initial investigation, which were subsequently ratified into the high-level workflow for migrating each legacy client deployment to the pipeline.

The Terraform testing and refactoring was performed locally for speed of development, then committed to the version control system after customer approval. Once committed, the change was automatically carried through the pipeline to complete the migration. Future environment changes would be handled via changes committed to the Terraform repository, which would launch the pipeline.

As a prerequisite, this process requires the Terraform state file and the local variables used for the initial deployment. The local variables are combined with a generic set of Terraform configuration files to become the basis of the new deployment. The state file is stored in S3, where it is accessible from the pipeline.
